This guide presents a series of steps that can be used to automate the collection of online HTML tables and the transformation of those tables into a more useful format. Of the two obstacles to working with public data described below, it seeks to address the first.
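
As a rough illustration of what "collecting an HTML table and transforming it into a more useful format" can mean in practice, here is a minimal sketch that uses only the Python standard library. The sample table, the `TableParser` class and the `table_to_csv` function are assumptions made for this example; they are not part of any tool mentioned in this guide, and real pages usually need more careful handling (nested tables, merged cells and so on).

```python
import csv
import io
from html.parser import HTMLParser


class TableParser(HTMLParser):
    """Collect the text of every <td>/<th> cell, grouped by <tr> row."""

    def __init__(self):
        super().__init__()
        self.rows = []     # finished rows, each a list of cell strings
        self._row = None   # row currently being read, or None
        self._cell = None  # text fragments of the current cell, or None

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self._row = []
        elif tag in ("td", "th") and self._row is not None:
            self._cell = []

    def handle_data(self, data):
        if self._cell is not None:
            self._cell.append(data)

    def handle_endtag(self, tag):
        if tag in ("td", "th") and self._cell is not None:
            # Collapse runs of whitespace inside the cell.
            self._row.append(" ".join("".join(self._cell).split()))
            self._cell = None
        elif tag == "tr" and self._row is not None:
            self.rows.append(self._row)
            self._row = None
            self._cell = None


def table_to_csv(html):
    """Return the table rows found in `html` as CSV text."""
    parser = TableParser()
    parser.feed(html)
    parser.close()
    out = io.StringIO()
    csv.writer(out, lineterminator="\n").writerows(parser.rows)
    return out.getvalue()


# Made-up sample data, standing in for a downloaded web page.
SAMPLE_HTML = """
<table>
  <tr><th>Company</th><th>Registered</th></tr>
  <tr><td>Acme Ltd</td><td>2014</td></tr>
  <tr><td>Globex, Inc.</td><td>2009</td></tr>
</table>
"""

print(table_to_csv(SAMPLE_HTML))
```

The CSV text can be written to a file and opened in a spreadsheet program. Cells that contain commas are quoted by the `csv` module, so they stay in one column.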

Meaningful investigations have made use of everything from live flight tracking data to public registries of companies to lobbying disclosures to hashtags created on Twitter, among countless other examples. While we are clearly seeing a proliferation of such information online, it is not always available in a format we can use. Leveraging public data for an investigation typically requires that it be not only machine readable but structured. In other words, a PDF that contains a photograph of a chart drawn on a napkin is less useful than a Microsoft Excel document that contains the actual data presented in that chart. This can be an issue even in cases where governments are compelled by Freedom of Information (FoI) laws to release data they have collected, maintained or financed. In fact, governments sometimes obfuscate data intentionally to prevent further analysis that might reveal certain details they would rather keep hidden.

Investigators who work with public data face many obstacles, but two of the most common are:

- Horrendous, multi-page HTML structures embedded in a series of consecutive webpages, and
- Pleasantly formatted tables trapped within the unreachable hellscape of a PDF document.

Automating the collection of such HTML tables and transforming them into a more useful format is often called "Web scraping."

With a free account, Parsehub, one of the scraping tools covered in this guide, promises to fetch data from up to 200 pages per run in 40 minutes. It lets you save up to five active projects and will store the extracted data for 14 days. For guidance, the app's dashboard lists interactive, written and video tutorials.

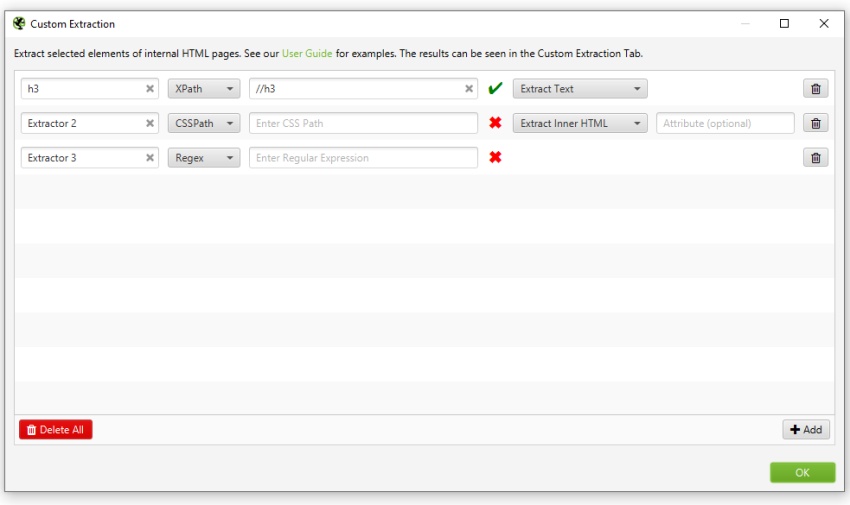

Parsehub | Parsehub works in a similar fashion to Octoparse. You have to create a flowchart of how information needs to be copied from a website: loading the website in the built-in browser, entering text, scrolling through content, clicking on specific links, copying content from the pages they lead to, and so on. You can perform various actions such as click, input, hover and loop to browse, navigate and collect information on the web page. For instance, you can use Parsehub to load a Twitter user page and save the tweets posted on that account, along with the URL and time stamp of each. After installing the software, it is recommended that you follow the tutorial and read the documentation on the Parsehub website to learn what each function does.

Here are three web scraping tools that you can try for free:

Octoparse | Octoparse can use your computer's resources to extract data, or use its own servers to harvest data from multiple web pages. The former is free and suitable for small projects where wait times of around 30-45 minutes are acceptable. The cloud-based service is a paid upgrade and better suited to dealing with lots of data.

You have to create a step-by-step 'workflow' that describes how you want the web crawling bot to browse a website, identify content (images, URLs, text, etc.) and place it into a table. Click on the page elements you want to extract (e.g., product names, prices, Twitter handles) and Octoparse will automatically detect similar elements on the rest of the page. You can also configure Octoparse to handle a website that is paginated (requires mouse clicks to go to the next page) or uses infinite scrolling.

When you have finished building the workflow, run the 'project' to see if it works correctly, modify the tasks if needed and save it. From then on, whenever you run the task, Octoparse will follow the steps to extract data. Once the workflow is created and tested, it will run through the series of instructions and collect data for you while you tend to other work.

The resulting table can be exported as an Excel, CSV or JSON file, or as a MySQL database file. The free account lets you crawl and scrape unlimited webpages and save up to 10,000 records per file export. The upgrade also gives you access to ready-made templates for mining content from social media accounts, travel websites, maps, job sites, etc.
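
To make the idea of a workflow more concrete, the following hand-written sketch mimics two of the steps above: collecting similar elements from each page and following a "next page" link until there are no more pages. It does not show how Octoparse works internally. The in-memory `PAGES` dictionary, the `class="name"` and `rel="next"` markers, and the `fetch_page` and `scrape_all` helpers are all assumptions invented for this example so that it runs without a network connection.

```python
import json
from html.parser import HTMLParser

# Hypothetical two-page site, kept in memory so the sketch runs offline.
PAGES = {
    "/products?page=1": (
        '<ul><li class="name">Kettle</li><li class="name">Toaster</li></ul>'
        '<a rel="next" href="/products?page=2">Next</a>'
    ),
    "/products?page=2": '<ul><li class="name">Blender</li></ul>',
}


def fetch_page(url):
    """Stand-in for a real HTTP request (assumption for this sketch)."""
    return PAGES[url]


class PageParser(HTMLParser):
    """Collect product names and the link to the next page, if any."""

    def __init__(self):
        super().__init__()
        self.names = []
        self.next_url = None
        self._in_name = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "li" and attrs.get("class") == "name":
            self._in_name = True
        elif tag == "a" and attrs.get("rel") == "next":
            self.next_url = attrs.get("href")

    def handle_data(self, data):
        if self._in_name:
            self.names.append(data.strip())

    def handle_endtag(self, tag):
        if tag == "li":
            self._in_name = False


def scrape_all(fetch, start_url, max_pages=50):
    """Follow 'next' links from start_url and return one record per item."""
    records, url, seen = [], start_url, set()
    # Stop on a missing link, a repeated URL, or after max_pages pages.
    while url and url not in seen and len(seen) < max_pages:
        seen.add(url)
        parser = PageParser()
        parser.feed(fetch(url))
        records.extend({"name": n, "source": url} for n in parser.names)
        url = parser.next_url
    return records


records = scrape_all(fetch_page, "/products?page=1")
print(json.dumps(records, indent=2))
```

The `seen` set and the `max_pages` limit are there so that a site whose "next" links loop back on themselves cannot keep the scraper running forever.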

What does a web scraper do? A web scraper is an automation solution that extracts text, web links, images and videos from a website. The data is then stored in a spreadsheet format before it is saved online or on your computer. Each web scraping tool offers its own set of features and options for how it processes data, how it saves it and how long it stores it. What all such tools have in common is that you need to spend some time 'teaching' them how to collect the data from a web page.
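
For readers curious about what that extraction step might look like in code, here is a minimal sketch that pulls the visible text, link addresses and image addresses out of a small page using Python's standard library. The `AssetExtractor` class, the sample HTML and the `https://example.org/data/` base address are assumptions made for illustration; the tools above do this through a point-and-click interface instead.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class AssetExtractor(HTMLParser):
    """Collect visible text, link URLs and image URLs from one page."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links, self.images, self.text = [], [], []
        self._skip = 0  # greater than 0 while inside <script> or <style>

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag in ("script", "style"):
            self._skip += 1
        elif tag == "a" and attrs.get("href"):
            # Resolve relative addresses against the page's own URL.
            self.links.append(urljoin(self.base_url, attrs["href"]))
        elif tag == "img" and attrs.get("src"):
            self.images.append(urljoin(self.base_url, attrs["src"]))

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip:
            self._skip -= 1

    def handle_data(self, data):
        if not self._skip and data.strip():
            self.text.append(data.strip())


# Made-up page content, standing in for a downloaded web page.
SAMPLE_PAGE = (
    "<h1>Registry</h1>"
    '<p>See the <a href="/list?page=2">next page</a>.</p>'
    '<img src="logo.png">'
    "<script>var x = 1;</script>"
)

extractor = AssetExtractor("https://example.org/data/")
extractor.feed(SAMPLE_PAGE)
print(extractor.text)
print(extractor.links)
print(extractor.images)
```

Each of the three lists could then be written out as a column or a separate sheet, which is roughly the "spreadsheet format" step described above.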